AI Engineering Management: How to Gain Visibility Without Micromanaging Your Team

Learn how modern AI engineering management works in 2026. Discover how to gain visibility into AI teams without micromanaging developers.

AI engineering management is becoming one of the hardest challenges for CTOs and engineering leaders in 2026. AI teams are moving faster than ever.

AI-assisted coding tools can sharply increase developer output; some teams report gains of 5x or even 10x. Engineers can generate code, iterate on models, and deploy prototypes in a fraction of the time it took just a few years ago.

This acceleration is exciting, but it creates a serious problem for modern AI engineering management: the faster your AI team moves, the less visibility you actually have. How do you maintain visibility over AI projects without slowing your team down?

Many CTOs and AI leaders are discovering that traditional engineering management methods simply do not scale in the era of AI-assisted development. Reviewing every pull request line by line, monitoring every commit, and constantly checking developer activity leads to one outcome: micromanagement.

And micromanagement is not just inefficient; it actively harms AI teams.

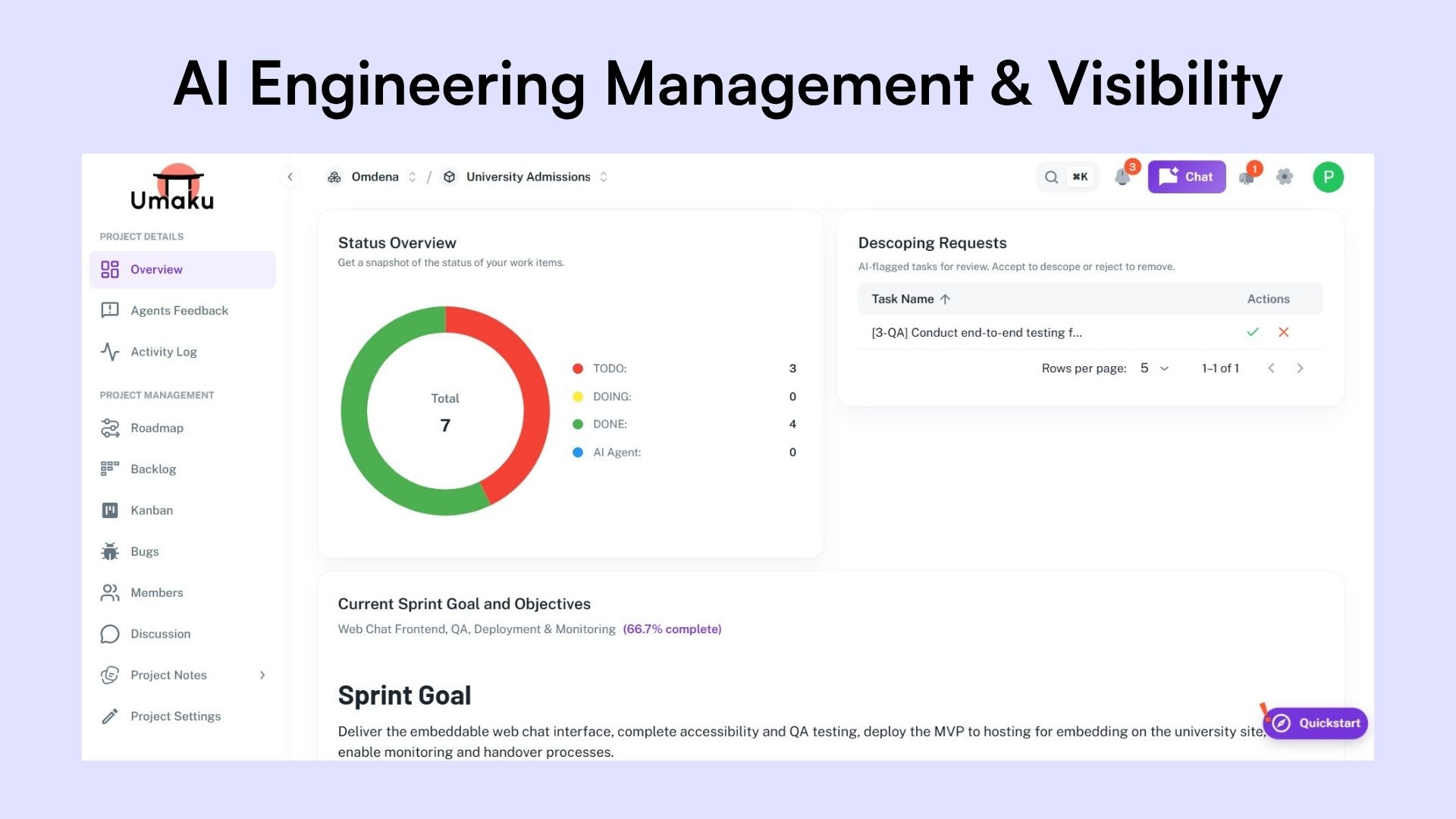

At Omdena, while managing large distributed AI teams, we faced this exact problem. The solution we developed was Umaku, a platform designed to provide deep project visibility while keeping teams autonomous.

This article breaks down a modern approach to AI engineering management and shows how leaders can gain real visibility without becoming a bottleneck.

Why Traditional Engineering Management Breaks at Scale

Traditional engineering management assumes a predictable workflow: developers write code, open pull requests, and managers review changes line by line. This worked when development velocity was slower and changes were smaller.

Today, AI-assisted development has changed the equation. Engineers can generate significantly more code in less time, making manual review processes a bottleneck.

But the challenge goes beyond speed.

AI engineering is fundamentally different from traditional software development. Much of the complexity lies in data, experimentation, and model behavior, not just in code. Without that context, reading the code alone rarely reveals whether the work aligns with project goals.

This creates three core problems:

- Leaders cannot realistically review all the code being produced

- Generic AI code review tools lack project context

- Many AI engineers come from research backgrounds, creating DevOps and integration gaps

The result is a management paradox: leaders need visibility, but traditional methods slow teams down.

To understand why this gap exists, it’s important to first define what AI engineering management actually involves.

What Is AI Engineering Management?

AI engineering management is the practice of overseeing the development, deployment, and performance of AI systems across the entire lifecycle. Unlike traditional software management, it involves coordinating code, data pipelines, and machine learning models within fast-moving experimentation cycles.

Leaders must manage not just application logic, but also data quality, model behavior, and continuous iteration. This introduces additional complexity in areas like versioning, reproducibility, and infrastructure.

Modern AI engineering management also requires strong DevOps practices, including CI/CD for models, monitoring, and scalable deployment. The goal is to maintain visibility and alignment across systems without slowing down innovation.

This added complexity makes one thing clear: traditional management approaches are no longer enough. To manage AI systems effectively, leaders need to rethink how visibility is created in the first place.

The Core Principle of Modern AI Engineering Management: Context Over Control

The key shift is simple but powerful:

Visibility should come from structured context, not manual inspection.

Rather than watching every line of code, leaders need systems that understand the broader project environment:

- The project goals.

- The technical architecture.

- The sprint objectives.

- The code being written.

When those pieces are connected, AI agents can analyze work automatically and provide meaningful insights.

This is the core idea behind how we use Umaku.

Leaders don’t need more dashboards or more manual reviews. What they need is a structured way to understand what is happening inside their AI projects without constantly interrupting the team.

At Umaku, we approach this challenge through a set of visibility layers. Each layer focuses on a different level of the engineering process, from project context to team performance, allowing leaders to gain clarity, detect risks early, and guide the project without falling into micromanagement.

Layer 1: Project Context in AI Engineering Management

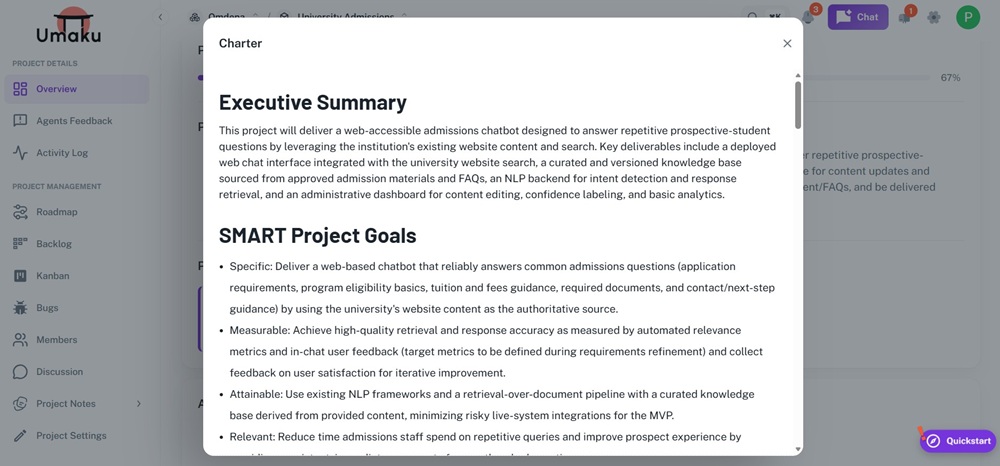

Project Charter in Umaku

The first step to avoiding micromanagement is establishing a clear project context.

Every project in Umaku begins with three essential inputs:

- Project Charter: the project goals, scope, business logic, and expected outcomes.

- Tech Stack Definition: the architecture of the system, dependencies, integrations, and services involved.

- Source Repositories and Documentation: links to GitHub repositories and related resources.

These elements give the platform the same understanding that a human engineering lead would normally have.

Why does this matter?

Because context allows automated systems to evaluate work intelligently. Without it, AI tools can only perform shallow code checks.

With it, they can reason about whether the implementation actually supports the product goals.

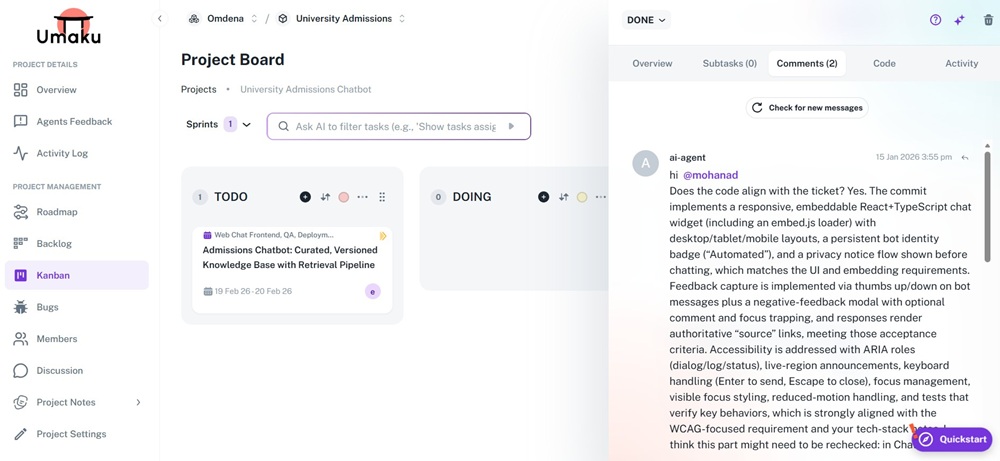

Layer 2: Ticket-Level Visibility Without Manual Code Reviews

Agent Feedback in Umaku

Once a project is defined, work is organized into sprints and tickets.

Developers complete tasks and attach the commit URL associated with their implementation. Instead of manually reviewing the code, engineering managers can trigger an AI review directly from the platform. This is where visibility begins to scale.

The AI agent evaluates the code using multiple contextual inputs: the project charter, the tech stack, and the ticket description.

The result is a detailed analysis explaining whether the implementation matches the ticket, how the code functions, and any potential issues or improvements.
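To make the idea of "context-aware review" concrete, here is a minimal sketch of how the three contextual inputs and a commit's diff could be combined into a single review request for an LLM. It is an illustration under our own assumptions, not Umaku's actual implementation: `ProjectContext`, `Ticket`, and `build_review_prompt` are hypothetical names, and the example repository URL is a placeholder.

```python
from dataclasses import dataclass


@dataclass
class ProjectContext:
    """Hypothetical container for the Layer 1 inputs (not Umaku's actual schema)."""
    charter: str     # goals, scope, business logic, expected outcomes
    tech_stack: str  # architecture, dependencies, integrations, services


@dataclass
class Ticket:
    """Hypothetical ticket record: what was asked, plus the commit the developer attached."""
    ticket_id: str
    description: str
    commit_url: str


def build_review_prompt(ctx: ProjectContext, ticket: Ticket, diff: str) -> str:
    """Combine project context, ticket intent, and the code change into one review request.

    The closing questions mirror the three things the review should explain:
    whether the change matches the ticket, how the code works, and what could improve.
    """
    sections = [
        "## Project charter\n" + ctx.charter.strip(),
        "## Tech stack\n" + ctx.tech_stack.strip(),
        f"## Ticket {ticket.ticket_id}\n" + ticket.description.strip(),
        f"## Code change ({ticket.commit_url})\n" + diff.strip(),
        "## Questions\n"
        "1. Does the implementation match the ticket and the project goals?\n"
        "2. Briefly explain how the code works.\n"
        "3. List potential issues or improvements.",
    ]
    return "\n\n".join(sections)


if __name__ == "__main__":
    ctx = ProjectContext(
        charter="Classify support emails by urgency for a help-desk team.",
        tech_stack="Python service with a scikit-learn model behind a REST API.",
    )
    ticket = Ticket(
        "T-42",
        "Add a /predict endpoint that returns an urgency label.",
        "https://github.com/example-org/example-repo/commit/abc123",  # placeholder URL
    )
    diff = "+ @app.post('/predict')\n+ def predict(req): ..."
    print(build_review_prompt(ctx, ticket, diff))
```

The point of the design is ordering: the model reads the project's goals and architecture before it sees the diff, so it can judge the change against intent rather than only against generic style rules.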

For a team leader, this provides three immediate advantages:

- You understand what was implemented without reading every line of code.

- You gain confidence that the work aligns with the project goals.

- You can identify deviations early without interrupting the developer workflow.

This approach replaces constant monitoring with on‑demand insight.

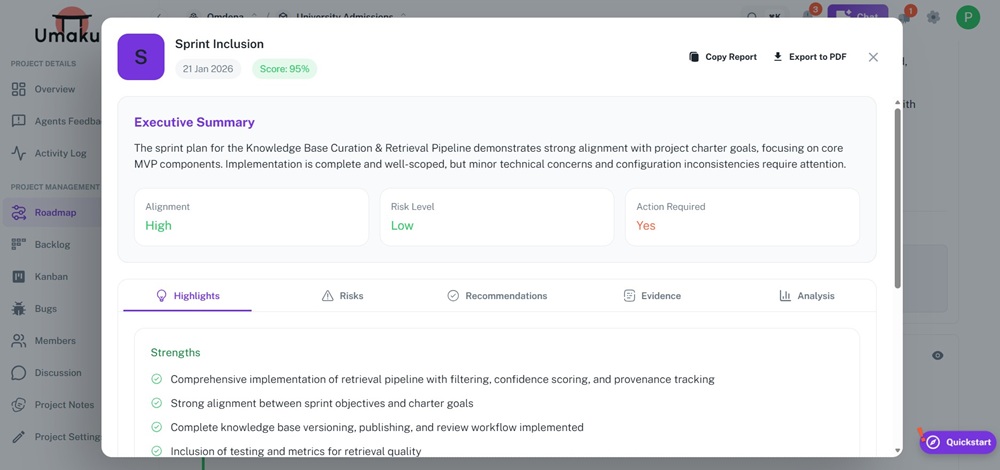

Layer 3: Sprint-Level Visibility Instead of Micromanagement

Sprint Inclusion Report in Umaku

Micromanagement often happens when leaders track developers individually rather than observing system outcomes.

A more effective strategy is to evaluate the health of the sprint itself.

At the end of each sprint, Umaku runs a set of deep research agents that analyze the entire development cycle.

These agents review: ticket alignment with project goals, implementation quality, integration risks, and architectural consistency.

Instead of checking scattered pieces of work, leaders receive a structured report that explains what actually happened during the sprint. This provides the level of visibility leaders need without interrupting the team’s workflow.

Layer 4: DevOps and AI Engineering Governance

DevOps Compliance Report in Umaku

AI teams often struggle with production readiness. Many strong data scientists are experts in modeling and experimentation but may have limited experience with packaging, infrastructure, or deployment pipelines.

To address this, Umaku includes specialized agents focused on DevOps compliance and AI engineering practices.

These agents check for issues such as: missing CI/CD pipelines, absent infrastructure‑as‑code, incorrect model packaging, poor artifact management, or a lack of version control for models.
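As a rough illustration of the simplest form such a check can take, the sketch below scans a local checkout for files that commonly signal CI/CD, infrastructure-as-code, model packaging, and model versioning. The rules in `CHECKS` are our own assumptions (GitHub Actions, GitLab CI, Jenkins, Terraform, Helm, Docker, DVC, MLflow), and a presence check is far shallower than the context-aware reasoning described here; it only shows the shape of the problem.

```python
from pathlib import Path

# Hypothetical, simplified rules: a check passes if any file matches one of its
# glob patterns. Real compliance agents would also inspect file contents and
# weigh them against the project's tech stack; this checks presence only.
CHECKS = {
    "ci_cd_pipeline": [".github/workflows/*.yml", ".github/workflows/*.yaml",
                       ".gitlab-ci.yml", "Jenkinsfile"],
    "infrastructure_as_code": ["**/*.tf", "**/Chart.yaml"],
    "model_packaging": ["**/Dockerfile"],
    "model_version_control": ["dvc.yaml", "**/*.dvc", "**/MLproject"],
}


def devops_report(repo_root: str) -> dict[str, bool]:
    """Return {check_name: passed} for a local repository checkout."""
    root = Path(repo_root)
    return {
        name: any(any(root.glob(pattern)) for pattern in patterns)
        for name, patterns in CHECKS.items()
    }


if __name__ == "__main__":
    report = devops_report(".")
    missing = [name for name, passed in report.items() if not passed]
    print("Missing:", ", ".join(missing) if missing else "nothing flagged")
```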

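The key advantage is that these checks are context‑aware: they are evaluated against the project's defined tech stack and goals rather than a generic checklist. This targeted evaluation helps prevent technical debt without forcing leaders to manually audit the system.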

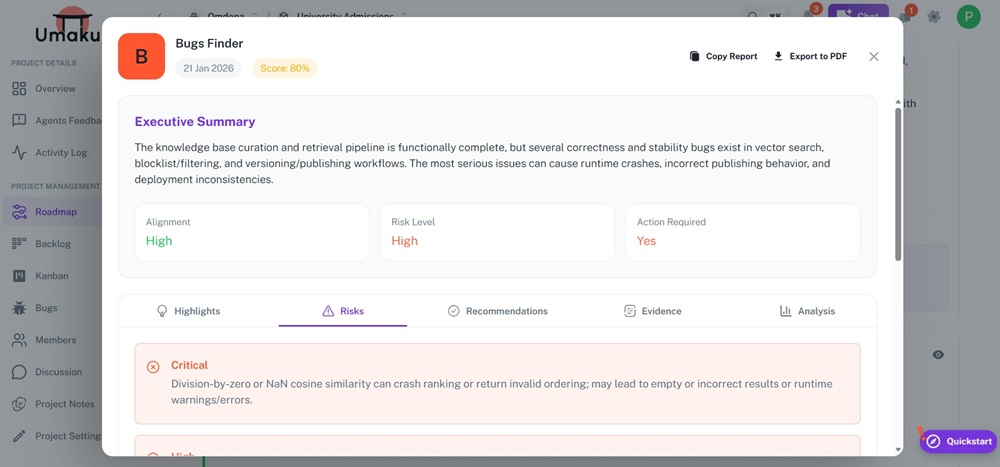

Layer 5: Automated Bug Discovery and Feedback Loops

Bug Finder Report in Umaku

Another major source of micromanagement is quality assurance. Managers often feel the need to manually verify code because they lack reliable feedback mechanisms.

In Umaku, bug‑detection agents analyze the codebase semantically and identify potential issues. These reports include: the location of the bug, the impact on the system, and a suggested fix.

From the report, leaders can automatically create a ticket assigned to the relevant developer.

Once the issue is fixed, the agent verifies whether the problem was truly resolved.

This creates a closed feedback loop where the system continuously improves code quality without requiring constant oversight.
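One way to picture this closed loop is as a small state machine on each bug ticket: a report becomes an open ticket, the developer marks it fixed, and a re-check either verifies the fix or reopens the ticket. The sketch below assumes that model; `BugTicket`, `mark_fixed`, and `verify` are hypothetical names, and `still_present` stands in for re-running whatever detection produced the original report.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Status(Enum):
    OPEN = "open"          # ticket created from a bug report
    FIXED = "fixed"        # developer reports the fix is in
    VERIFIED = "verified"  # re-check no longer finds the issue
    REOPENED = "reopened"  # re-check still finds the issue


@dataclass
class BugTicket:
    location: str       # where the bug was found, e.g. "src/train.py:88"
    impact: str         # effect on the system
    suggested_fix: str  # proposed remedy from the report
    assignee: str
    status: Status = Status.OPEN


def mark_fixed(ticket: BugTicket) -> BugTicket:
    """Developer signals a fix; allowed from OPEN or REOPENED."""
    if ticket.status not in (Status.OPEN, Status.REOPENED):
        raise ValueError(f"cannot mark a {ticket.status.value} ticket as fixed")
    ticket.status = Status.FIXED
    return ticket


def verify(ticket: BugTicket, still_present: Callable[[str], bool]) -> BugTicket:
    """Close the loop: re-run detection at the bug's location after a reported fix."""
    if ticket.status is not Status.FIXED:
        raise ValueError("only tickets marked FIXED can be verified")
    ticket.status = Status.REOPENED if still_present(ticket.location) else Status.VERIFIED
    return ticket
```

A reopened ticket goes back to the same developer and through the same loop, which is what keeps the process self-correcting without a manager re-checking the fix by hand.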

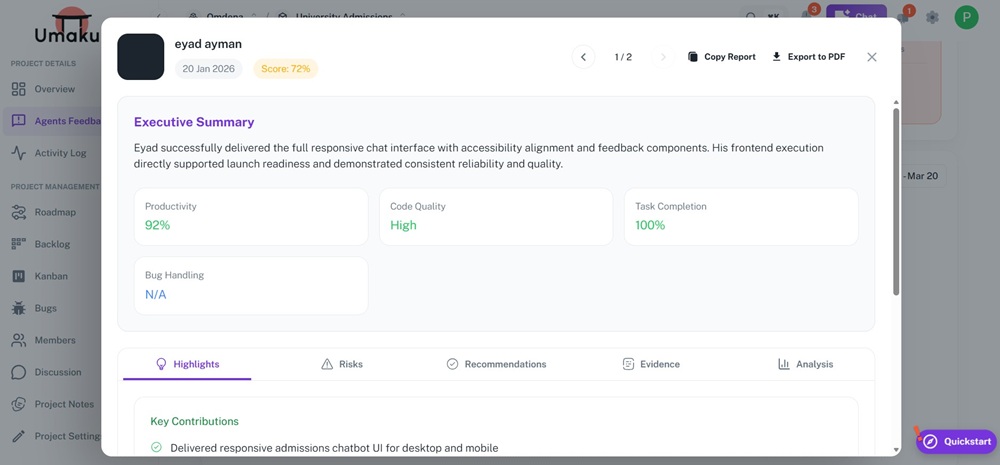

Layer 6: AI Engineering Team Analytics and Performance Insights

Team Performance Report in Umaku

Finally, leaders need a strategic view of team performance.

Instead of tracking individuals minute by minute, Umaku aggregates insights such as tasks assigned vs. completed, code contributions, workload distribution, and productivity trends.

These analytics highlight potential issues such as overloaded engineers, delayed tasks, or uneven sprint contributions.
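A minimal sketch of the task-level part of this aggregation is shown below: per-engineer assigned vs. completed counts, each engineer's share of the sprint workload, and a flag for engineers carrying many open tasks. The input format and the `overload_threshold` cut-off are hypothetical choices for illustration, and code contributions and trends over time are left out.

```python
from collections import Counter


def team_summary(tasks: list[dict], overload_threshold: int = 5) -> dict[str, dict]:
    """Aggregate sprint tasks per engineer.

    tasks: list of {"assignee": str, "done": bool} records (hypothetical format).
    overload_threshold: hypothetical cut-off; engineers with more open
    (not done) tasks than this are flagged as overloaded.
    """
    assigned = Counter(t["assignee"] for t in tasks)
    completed = Counter(t["assignee"] for t in tasks if t["done"])
    total = len(tasks)
    summary = {}
    for person, n in assigned.items():
        open_tasks = n - completed[person]  # Counter returns 0 for missing keys
        summary[person] = {
            "assigned": n,
            "completed": completed[person],
            "completion_rate": completed[person] / n,
            "workload_share": n / total,
            "overloaded": open_tasks > overload_threshold,
        }
    return summary
```

Because every figure is computed per sprint and per team, the output describes how work is distributed rather than what any one person did minute by minute.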

Again, the focus is on visibility, not surveillance.

Traditional vs Modern AI Engineering Management

AI is reshaping how engineering teams operate. As AI becomes embedded across the software lifecycle, management is shifting from manual oversight to intelligent, system-driven visibility.

| Traditional | Modern |
|---|---|
| Manual code reviews | AI-assisted code analysis |
| Developer monitoring | System-level visibility |
| Reactive debugging | Predictive monitoring & auto-remediation |
| Siloed DevOps & MLOps | Unified AI + DevOps workflows |
| Periodic checks | Continuous, real-time insights |

Modern AI engineering management focuses on systems, automation, and context—allowing teams to move faster without sacrificing control.

Stop Managing Developers. Start Managing Systems.

AI engineering management is no longer about controlling developers—it’s about designing systems that create visibility without friction.

Micromanagement happens when leaders try to supervise people directly. High-performing AI teams operate differently. When project context, task tracking, code analysis, and team analytics are connected, leaders gain clarity without interrupting workflows.

Developers stay autonomous. Managers stay informed. Teams move faster.

As AI development accelerates, manual oversight becomes a bottleneck. Modern AI engineering management relies on structured, context-driven visibility instead of constant intervention.

Platforms like Umaku enable this shift—helping teams scale without sacrificing speed or control.

If you want to scale AI teams without slowing them down, the solution is not more oversight—it’s better visibility systems.

👉 You can sign up for Umaku to see how this works in practice.

- What is AI engineering management?

  AI engineering management is the practice of overseeing the development, deployment, and performance of AI systems. It involves managing code, data pipelines, and machine learning models while ensuring alignment with business goals, scalability, and system reliability.

- Why is traditional engineering management not effective for AI teams?

  Traditional engineering management relies on manual code reviews and developer monitoring, which do not scale in AI environments. AI teams move faster, generate more code, and work with complex systems like models and data pipelines, making manual oversight inefficient and often counterproductive.

- How can you manage AI teams without micromanaging them?

  You can manage AI teams effectively by focusing on system-level visibility instead of individual monitoring. This includes using tools that provide insights into project context, code quality, sprint progress, and team performance without interrupting developer workflows.

- What tools help with AI engineering management?

  Modern AI engineering management tools like Umaku provide context-aware visibility across projects. These platforms use AI to analyze code, track progress, detect issues, and generate insights, helping leaders make informed decisions without relying on manual reviews.