Save Your AI Project from Failing in Production: The Case for Context-Aware Code Review

Context-aware code review ensures code aligns with business intent—not just tests. Learn how to prevent semantic drift in DevOps.

Software teams today rely on automated tools, microservices, and AI coding assistants to release features quickly. But as development speeds up, an important problem often goes unnoticed. Most checks in the pipeline confirm that code runs correctly from a technical point of view. They do not confirm that the code truly follows the original business requirements.

That is where context-aware code review becomes essential. It goes beyond passing QA tests and verifies that code aligns with user stories, acceptance criteria, and real business goals.

A QA test may turn green, yet the implementation can still violate critical business rules. Code can compile, deploy, and still be wrong. In this article, we explore why this gap exists, how modern workflows contribute to it, and how teams can adopt context-aware code review to prevent semantic drift and avoid expensive production mistakes.

What Is Context-Aware Code Review?

Context-aware code review is a method of checking code that goes beyond syntax, tests, and formatting. Instead of asking only “Does this run?”, it asks “Does this solve the right problem?” It evaluates whether code truly matches the intent expressed in requirements, acceptance criteria, user stories, and business rules. This type of review can be done by humans, tools, or a combination of both, and it focuses on semantic validation—understanding meaning, not just mechanics.

Traditional code reviews and automated tests confirm that code behaves as expected in controlled scenarios. But behaving as expected is not the same as behaving correctly in the real business context. When intent is not explicitly validated, teams can gain a false sense of confidence. Everything looks healthy on the surface—builds pass, tests turn green, approvals move forward. Yet critical logic gaps remain hidden.

This false confidence is what creates the illusion of correctness in modern code reviews.

The Illusion of Correctness in Modern Code Reviews

Modern teams rely on linters, unit tests, integration tests, and pull request reviews. On paper, the process looks thorough: every change is checked several times before it ships.

Yet business logic errors still reach production.

The reason is simple: most review systems validate behavior, not intent. They check whether the code runs as written, not whether it reflects the original business requirement. This creates an illusion of correctness—everything appears fine, but misalignment remains hidden.

Where the gap appears:

- Unit tests confirm that the code behaves as the developer expects. If the understanding of the requirement is flawed, the tests reinforce that flaw.

- Integration tests verify that systems communicate correctly. They do not check whether the interaction fulfills a business rule.

- PR reviews focus on readability and structure. They rarely re-evaluate the deeper intent behind the change.

When confidence in technical checks replaces validation of intent, the problem goes deeper than individual mistakes. It becomes structural. The issue is not that developers ignore requirements; it is that modern workflows make it easy for intent to drift away from implementation.

This is what we call the context gap.

The Context Gap in Traditional Development

The context gap does not happen because developers are careless. It emerges from how modern software teams operate. Even well-structured workflows can unintentionally separate implementation from intent. As systems grow more complex and AI tools become common, the risk of semantic drift increases.

Key contributors to the context gap include:

- Parallel development: Multiple engineers work on related features at the same time, often with partial visibility into evolving requirements.

- Task fragmentation: Small or ambiguous tickets hide the broader business objective behind isolated technical tasks.

- AI-generated code risks: AI assistants produce syntactically correct code from limited prompts, but prompt incompleteness can lead to missing constraints or edge cases.

- Time pressure: Tight release cycles reduce deep requirement validation, allowing logic misalignment to move forward unnoticed.

Together, these factors create a system where correctness is assumed, but intent is rarely verified. A concrete example makes the risk easier to see.

Real Example: When Code Passes Tests but Violates Business Rules

Let’s see how the absence of context-aware code review creates real production risk.

Requirement

A product manager defines a clear rule:

- Accounts marked as FROZEN must not accrue interest.

- If an account is frozen at any time during the monthly period, the interest for that period must be zero.

Implementation

A developer writes the following function:

    def apply_monthly_interest(account):
        if account.status != "CLOSED":
            interest = account.balance * 0.02
            account.balance += interest
        return account

Why it passes review

The logic is clean and readable. There are no syntax errors. A unit test may confirm that closed accounts are excluded. CI passes. The pull request gets approved.
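To make the green build concrete, here is a minimal sketch of the kind of test suite that could sit next to this function. The `Account` dataclass and both test names are assumptions made for illustration; the article does not show the real tests.

```python
from dataclasses import dataclass


@dataclass
class Account:
    # Hypothetical minimal account model, assumed for this sketch.
    balance: float
    status: str


def apply_monthly_interest(account):
    # The implementation from the article, unchanged.
    if account.status != "CLOSED":
        interest = account.balance * 0.02
        account.balance += interest
    return account


def test_closed_accounts_do_not_accrue_interest():
    account = apply_monthly_interest(Account(balance=1000.0, status="CLOSED"))
    assert account.balance == 1000.0


def test_active_accounts_accrue_two_percent():
    account = apply_monthly_interest(Account(balance=1000.0, status="ACTIVE"))
    assert abs(account.balance - 1020.0) < 1e-9


# Both tests pass. Neither one exercises a FROZEN account, so the suite
# only confirms the behavior the developer already had in mind.
test_closed_accounts_do_not_accrue_interest()
test_active_accounts_accrue_two_percent()
```

The tests are reasonable on their own terms. What is missing is any test derived from the product manager's rule rather than from the code.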

Why it fails the business

The code never checks for the FROZEN status. It also ignores the “any time during the period” condition. Technically correct execution hides a deeper failure: business intent was never validated. This is semantic drift in action.
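One possible shape for an implementation that honors both parts of the rule is sketched below. It assumes the account model can report every status it held during the period; the `statuses_this_period` field and the `was_frozen_this_period` helper are hypothetical names, not part of the original code, and a real system would likely derive this from an audit log or status history table.

```python
from dataclasses import dataclass, field

MONTHLY_RATE = 0.02  # Same rate as the original function.


@dataclass
class Account:
    balance: float
    status: str
    # Hypothetical: every status the account held during the current period.
    statuses_this_period: set = field(default_factory=set)


def was_frozen_this_period(account):
    # Covers both "frozen right now" and "frozen at any time during the period".
    return account.status == "FROZEN" or "FROZEN" in account.statuses_this_period


def apply_monthly_interest(account):
    # Closed accounts are excluded, as in the original implementation.
    if account.status == "CLOSED":
        return account
    # Requirement: any freeze during the period means zero interest.
    if was_frozen_this_period(account):
        return account
    account.balance += account.balance * MONTHLY_RATE
    return account


# A test derived from the requirement rather than from the code:
# the account is active today but was frozen earlier in the month.
thawed = Account(balance=1000.0, status="ACTIVE",
                 statuses_this_period={"FROZEN", "ACTIVE"})
assert apply_monthly_interest(thawed).balance == 1000.0
```

The final assertion is the interesting part. It only exists because someone read the acceptance criteria and turned the "any time during the period" clause into a test case.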

This example is not an isolated mistake. It is a predictable outcome of how most development pipelines are structured. The code passed every standard checkpoint because none of those checkpoints were designed to validate business intent. The failure was not in syntax, formatting, or system integration. The failure was in alignment.

To understand why this keeps happening, we need to examine how traditional DevOps pipelines are built.

Why Traditional DevOps Pipelines Miss Intent

Traditional pipelines validate syntax and integration. They do not validate intent.

Most CI/CD systems are designed to catch technical failures. They ensure code compiles, follows style rules, passes tests, and integrates correctly with other services. But they rarely verify whether the implementation aligns with the original business requirement.

A typical DevOps pipeline looks like this:

| Step | Layer | Function |
| --- | --- | --- |
| 1 | Static Analysis | Catches syntax issues, style violations, and common security risks. |
| 2 | Unit & Integration Tests | Validates component behavior and system communication. |
| 3 | Human Review | Reviews structure, performance, and architectural decisions. |

Notice what is missing: a dedicated layer for intent verification.

Without context-aware code review, there is no structured checkpoint that compares implementation against user stories, acceptance criteria, or historical business rules. The pipeline confirms that the code works. It does not confirm that the code is right.

This is where a new layer becomes necessary—one focused on semantic validation and business alignment.

How Context-Aware Code Review Works

Context-aware code review adds a structured layer that verifies alignment between implementation and intent. Instead of evaluating code in isolation, it evaluates code in context.

At a high level, it works through four key checks:

- Cross-reference ticket and code: The system connects the commit or pull request to the original ticket, user story, or requirement document.

- Analyze acceptance criteria: It compares the implemented logic against defined edge cases and explicit constraints.

- Validate business rules: It ensures domain-specific conditions, exclusions, and time-based rules are reflected in the logic.

- Check historical patterns: It reviews similar past implementations to detect deviations from established business behavior.

This process introduces semantic validation into the pipeline. The goal is not to replace testing, but to verify that the implementation reflects the intended outcome.
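A deliberately simple, non-AI approximation of the first two checks can be sketched in a few lines. This is not how any particular product implements them. The ticket key format, the `Criterion` type, the `TICKETS` lookup, and the `BANK-42` key are all assumptions made for illustration.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional

# Assumed ticket key format, e.g. "BANK-42".
TICKET_KEY = re.compile(r"\b[A-Z][A-Z0-9]+-\d+\b")


@dataclass
class Criterion:
    description: str
    check: Callable[[], bool]  # True when the implementation satisfies it.


def ticket_key_from(commit_message: str) -> Optional[str]:
    # Cross-reference step: find the ticket a commit claims to implement.
    match = TICKET_KEY.search(commit_message)
    return match.group(0) if match else None


def unmet_criteria(criteria: list[Criterion]) -> list[str]:
    # Acceptance-criteria step: list every criterion the code does not satisfy.
    return [c.description for c in criteria if not c.check()]


def naive_interest(balance: float, status: str) -> float:
    # Stand-in for the implementation under review.
    return balance * 0.02 if status != "CLOSED" else 0.0


# Hypothetical ticket store mapping ticket keys to executable criteria.
TICKETS = {
    "BANK-42": [
        Criterion("Closed accounts accrue no interest",
                  lambda: naive_interest(1000.0, "CLOSED") == 0.0),
        Criterion("Frozen accounts accrue no interest",
                  lambda: naive_interest(1000.0, "FROZEN") == 0.0),
    ]
}

key = ticket_key_from("BANK-42: apply monthly interest")
report = unmet_criteria(TICKETS[key])
print(key, report)  # BANK-42 ['Frozen accounts accrue no interest']
```

The design choice worth noting is that criteria live with the ticket, not with the code. The developer's misunderstanding of the requirement cannot silently rewrite them.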

Context-Aware Code Review in Action (Umaku)

To demonstrate this approach, we applied it using Umaku, our project management platform.

We began with a pull request that passed linters and unit tests. From a traditional CI/CD perspective, it was production-ready.

Production Ready Build

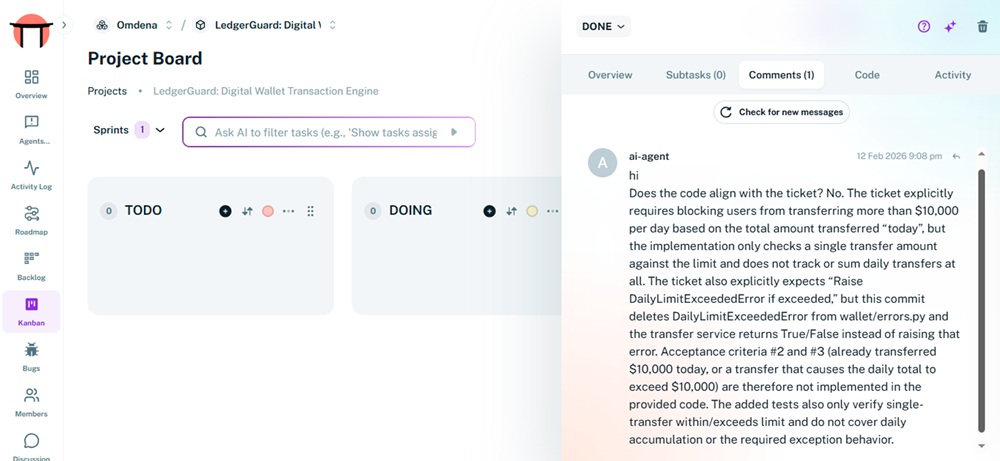

However, when we connected the commit URL to its corresponding ticket in Umaku, a deeper analysis began.

The system retrieved the user story, acceptance criteria, and Definition of Done. It then performed a semantic cross-check between the documented intent and the implemented logic.

Figures: Ticket Feedback in Umaku; Code Quality Feedback in Umaku; Overall Agentic Feedback in Umaku.

Two outcomes became clear:

- Naive implementation: Flagged for missing business constraints described in the ticket.

- Context-aligned implementation: Confirmed that all acceptance criteria were reflected in the code logic.

This acted as a pre-flight check. Before human review, the system verified alignment between code and business rules. The result was not just syntactic confidence, but intent assurance.

Benefits of Context-Aware Code Review

Adding context-aware code review to your process does more than catch hidden bugs. It can improve quality, predictability, and team confidence in several ways.

- Prevents business logic regressions: By validating intent against requirements and acceptance criteria, it stops domain-level errors before they reach production.

- Reduces production incidents: Fewer logic gaps mean fewer outages caused by mismatches between implementation and real-world rules.

- Speeds up PR approval cycles: Automated semantic checks reduce the back-and-forth between reviewers and authors, making reviews more efficient.

- Improves AI-generated code reliability: AI tools often miss nuances. Context-aware reviews ensure that generated code honors business constraints.

- Strengthens Definition of Done: Intent becomes a formal part of completion criteria, not an afterthought, making release quality more predictable.

Together, these benefits elevate quality far beyond what syntax checks alone can deliver.

From Code Correctness to Intent Alignment

Closing the context gap requires more than adding another tool to the pipeline. It requires redefining what “done” truly means. Technical correctness is the baseline. Business alignment is the standard. Without intent verification, even well-tested code can quietly introduce costly logic errors.

Engineering leaders can take practical steps:

- Mandate business intent in every ticket: Make the “why” explicit, not implied.

- Introduce a context checklist: Validate acceptance criteria and domain rules before approval.

- Add a pre-flight alignment layer: Run context-aware code review before human review to catch semantic drift early.
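The first two steps can start as something very small, such as a script that refuses to move a ticket forward until its context is filled in. The sketch below assumes tickets are available as plain dictionaries; the field names `business_intent`, `acceptance_criteria`, and `domain_rules` are hypothetical and would need to match whatever your tracker exports.

```python
# Hypothetical field names; adjust to match your ticket tracker's export.
REQUIRED_FIELDS = ("business_intent", "acceptance_criteria", "domain_rules")


def missing_context(ticket: dict) -> list[str]:
    """Return the required context fields that are absent or empty."""
    missing = []
    for name in REQUIRED_FIELDS:
        value = ticket.get(name)
        if isinstance(value, str):
            value = value.strip()  # Treat whitespace-only text as empty.
        if not value:
            missing.append(name)
    return missing


ticket = {
    "title": "Apply monthly interest",
    "business_intent": "   ",
    "acceptance_criteria": ["Frozen accounts must not accrue interest"],
}
print(missing_context(ticket))  # ['business_intent', 'domain_rules']
```

A check like this does not verify that the code matches the intent. It only guarantees that the intent has been written down, which is the precondition for every later check.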

This is exactly where Umaku fits. Umaku connects tickets, acceptance criteria, and commits to perform semantic cross-checks automatically. It ensures your code does not just pass tests, but reflects business intent.

If you want to strengthen alignment in your DevOps pipeline and reduce logic-driven production issues, sign up for Umaku and see context-aware code review in action.

Frequently Asked Questions

- What is context-aware code review? Context-aware code review is a method of validating code against business intent, acceptance criteria, and domain rules, not just syntax or test results. It ensures the implementation solves the right problem, not just that it runs correctly.

- How is context-aware code review different from traditional code review? Traditional code reviews focus on readability, structure, and test coverage. Context-aware code review adds semantic validation by comparing code against user stories, business rules, and historical requirements to ensure alignment.

- Why can code pass tests but still fail in production? Tests validate expected behavior based on assumptions made during development. If those assumptions are incomplete or incorrect, the tests may pass while the implementation violates real business logic.

- Is context-aware code review only needed for AI-generated code? No. While AI-generated code increases the risk of missing constraints, human-written code can also drift from business intent. Context-aware review applies to all code.

- Can context-aware code review replace human reviewers? No. It acts as a pre-flight alignment layer before human review. It strengthens the review process by verifying intent, allowing human reviewers to focus on architecture and strategy.